Abstract

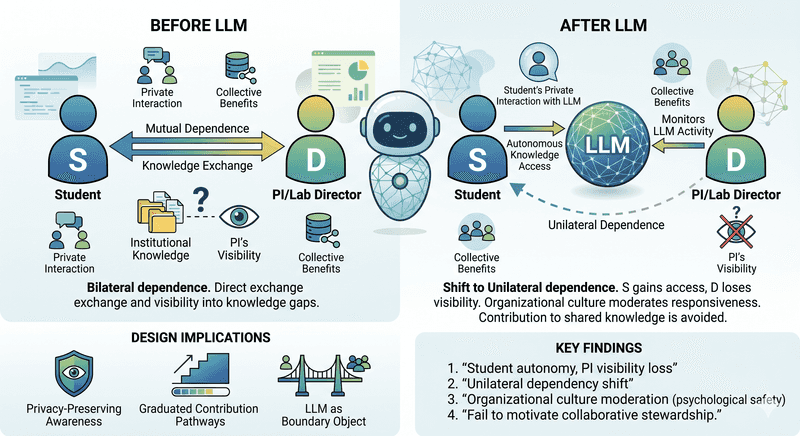

Large Language Models (LLMs) are increasingly embedded in collaborative workflows, yet their structural effects on the relationships between human stakeholders in data-intensive knowledge work remain underexplored. In this position paper, we reinterpret findings from a month-long field deployment of an LLM-based chatbot that mediates organizational memory across four university research labs (N=21) through the lens of Interdependence Theory. Our analysis reveals two key dynamics. First, the LLM redistributes the dependence structure between students and lab directors: while students gain autonomous access to institutional knowledge, directors lose visibility into knowledge gaps, shifting bilateral dependence toward unilateral dependence. This redistribution is moderated by organizational culture: it amplifies mutual responsiveness in psychologically safe environments and dampens it where students default to private interaction. Second, the system fails to support the transformation of motivation needed for collaborative knowledge stewardship: students consistently avoid contributing to documentation because of role perceptions and the temporal asymmetry between individual costs and collective benefits. We derive design implications, including privacy-preserving awareness mechanisms, graduated contribution pathways, and design for the LLM's dual role as a boundary object across stakeholder groups.